Cadmus

Cadmus is a document reader for Kobo’s e-readers.

Documentation

This site is the primary source of documentation for Cadmus. Use the sidebar to navigate, or start at the overview for installation, usage, and workflows.

Supported firmwares

Any 4.X.Y firmware, with X ≥ 6, will do.

Supported devices

- Libra Colour.

- Clara Colour.

- Clara BW.

- Elipsa 2E.

- Clara 2E.

- Libra 2.

- Sage.

- Elipsa.

- Nia.

- Libra H₂O.

- Forma.

- Clara HD.

- Aura H₂O Edition 2.

- Aura Edition 2.

- Aura ONE.

- Glo HD.

- Aura H₂O.

- Aura.

- Glo.

- Touch C.

- Touch B.

Supported formats

Features

- Crop the margins.

- Continuous fit-to-width zoom mode with line preserving cuts.

- Rotate the screen (portrait ↔ landscape).

- Adjust the contrast.

- Define words using dictd dictionaries.

- Annotations, highlights and bookmarks.

- Retrieve articles from online sources through hooks.

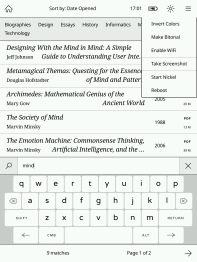

Screenshots

Acknowledgments

Cadmus is a fork of Plato, a document reader created by Bastien Dejean.

Installation

Cadmus comes in different packages. Pick the one that matches your needs.

Available packages

| Package | What’s included | Installs to |
|---|---|---|
| KoboRoot.tgz | Cadmus only | /mnt/onboard/.adds/cadmus |
| KoboRoot-nm.tgz | Cadmus + NickelMenu | /mnt/onboard/.adds/cadmus |
| KoboRoot-test.tgz | Test build only | /mnt/onboard/.adds/cadmus-tst |
| KoboRoot-nm-test.tgz | Test build + NickelMenu | /mnt/onboard/.adds/cadmus-tst |

Which one should I pick?

- Normal installs: Use KoboRoot.tgz or KoboRoot-nm.tgz

- If you use NickelMenu: Pick a package that includes it (-nm versions)

- Testing a new feature: Use test packages (-test versions) for trying out changes that haven’t been released yet

First-time setup

- Go to the latest release.

- Download the package you want from the table above.

- Connect your Kobo to your computer via USB.

- Rename the downloaded file to KoboRoot.tgz.

- Copy that renamed file to /mnt/onboard/.kobo/KoboRoot.tgz on the device.

- Eject the device and reboot.

Note

You must rename the file to KoboRoot.tgz before copying it to your Kobo. For example, KoboRoot-nm.tgz and KoboRoot-test.tgz will not install until you rename them.

Updating

There are two ways to update Cadmus once it’s installed.

Wirelessly (OTA)

The easiest way — no computer needed, just WiFi. Open Main Menu → Check for Updates and follow the prompts. See OTA updates for details.

Via USB

You can also update by copying a new package over USB, the same way you did the first-time install.

- Connect your Kobo to your computer via USB.

- When Cadmus asks “Share storage via USB?”, tap Share.

- Download the package you want from the latest release.

- Copy it to /mnt/onboard/.kobo/KoboRoot.tgz on your Kobo.

- Eject and disconnect the USB cable.

Note

Always name the file KoboRoot.tgz on the device, regardless of which package you downloaded (e.g. KoboRoot-nm.tgz must be renamed).

Cadmus detects the file automatically and reboots your Kobo to install the update. You don’t need to do anything else.

Uninstalling

See Uninstalling Cadmus.

Uninstalling Cadmus

To remove Cadmus from your Kobo:

- Connect your Kobo to your computer via USB.

- Delete the Cadmus folder from .adds:

| Build | Folder to delete |
|---|---|
| Stable | /mnt/onboard/.adds/cadmus |
| Test | /mnt/onboard/.adds/cadmus-tst |

- If you installed a package that included NickelMenu, delete the Cadmus menu entry too:

| Build | NickelMenu entry to delete |
|---|---|
| Stable | /mnt/onboard/.adds/nm/cadmus |
| Test | /mnt/onboard/.adds/nm/cadmus-tst |

- Eject the device and disconnect the USB cable.

Note

If you no longer need NickelMenu at all, you can remove it separately.

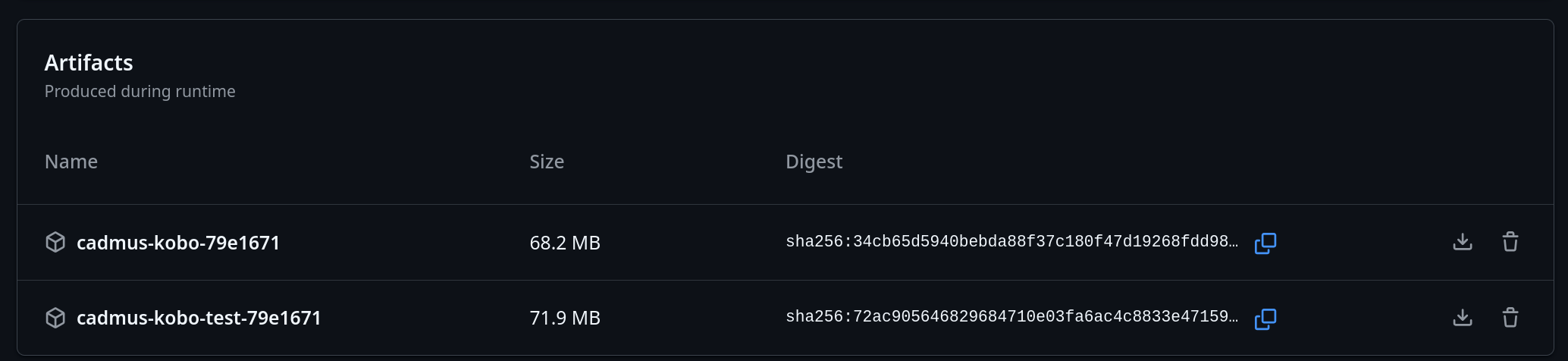

Test builds

First-time install

- Open the Cadmus GitHub Actions page.

- Select the run for the change you want to test.

- Download the cadmus-kobo-test-<suffix> file.

- Extract it and pick the package that matches your setup.

- Rename the selected file to KoboRoot.tgz.

- Copy that renamed file to /mnt/onboard/.kobo/KoboRoot.tgz.

- Eject the device and reboot.

Note

Test packages such as KoboRoot-test.tgz and KoboRoot-nm-test.tgz must be renamed to KoboRoot.tgz before you copy them to your Kobo.

Updating an existing test build

Use the OTA feature to download updates from a PR number directly on your device. This lets you test changes without connecting to a computer.

OTA updates

Once Cadmus is installed, you can update it wirelessly without connecting to a computer. The OTA (Over-The-Air) feature downloads updates directly from GitHub.

What you need

- A WiFi connection

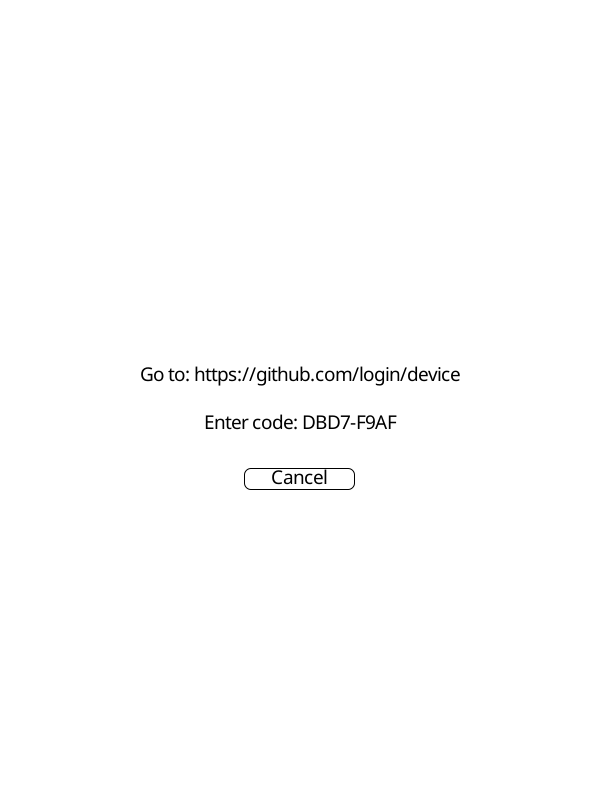

Authentication

Main branch and PR builds require a GitHub account. Stable releases are public and need no authentication.

The first time you request a main branch or PR build, Cadmus will show a screen with a URL and a short code:

- Go to the URL shown on screen

- Enter the code shown on your device

- Sign in to GitHub and approve the request

Cadmus detects the approval automatically and starts the download. The token is saved to disk so you won’t need to sign in again.


How to update

Open Main Menu → Check for Updates. You’ll see options for where to get the update from:

| Source | Description |

|---|---|

| Stable Release | Latest official release from GitHub |

| Main Branch | Latest development build (most recent changes) |

| PR Build | Test a specific pull request |

Note

The Stable Release option is not shown in test builds.

Updating from the main branch

Select Main Branch to get the most recent development build. This includes changes that have been merged but not yet released officially.

If you haven’t authenticated before, Cadmus will guide you through the GitHub sign-in process. See Authentication for details.

The update downloads from GitHub, installs automatically, and reboots the device to finish.

Before that reboot, Cadmus removes the files it previously installed so the new package can replace them cleanly. Your custom fonts, icons, and other user-added files will be preserved.

Testing a pull request

Select PR Build to try out a specific change before it’s released. Enter the PR number when prompted. If you haven’t authenticated before, Cadmus will guide you through the GitHub sign-in process. See Authentication for details.

Tip

Find the PR number in the GitHub URL. For example, in github.com/OGKevin/cadmus/pull/42 the PR number is 42.
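The tip above can be sketched in code. This is only an illustration of reading the number out of a pull request URL; the function name `pr_number_from_url` is hypothetical and is not part of Cadmus.

```rust
/// Extracts the pull request number from a GitHub PR URL.
/// Hypothetical helper for illustration; not taken from the Cadmus source.
/// Returns None when the URL has no `/pull/<number>` segment.
fn pr_number_from_url(url: &str) -> Option<u32> {
    // Keep everything that follows "/pull/".
    let (_, rest) = url.split_once("/pull/")?;
    // Take only the leading digits so suffixes such as "/files" are ignored.
    let digits: String = rest.chars().take_while(|c| c.is_ascii_digit()).collect();
    // An empty digit string fails to parse and yields None.
    digits.parse().ok()
}
```

Called with github.com/OGKevin/cadmus/pull/42, this yields 42, the value you would type into the PR Build prompt.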

Normal vs test builds

OTA works for both types of builds. The type you’re currently using determines what gets downloaded:

- Normal builds update to KoboRoot.tgz in /mnt/onboard/.adds/cadmus

- Test builds update to KoboRoot-test.tgz in /mnt/onboard/.adds/cadmus-tst

See the available packages table for all options.

First-time setup

OTA only works for updating an existing installation. To install Cadmus for the first time, follow the installation guide or the test builds guide to copy a KoboRoot file via USB.

Troubleshooting

“Insufficient disk space” error

If Cadmus shows an error like “Insufficient disk space: need 100MB, have XMB” while downloading an update:

- Free up space on your Kobo by deleting books or other files you do not need

- Cadmus downloads update files into its own tmp folder inside the Cadmus folder

- This error means internal storage is too full for the update file
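As a rough sketch of what such a check might look like: the 100 MB threshold comes from the example message above, while the function name, the way free space is obtained, and the 1024-based megabyte are assumptions made for illustration only.

```rust
/// Space the update needs, taken from the example error message (100 MB).
const REQUIRED_MB: u64 = 100;

/// Hypothetical pre-download check; not the actual Cadmus implementation.
/// `available_bytes` is whatever free space the caller measured.
fn check_disk_space(available_bytes: u64) -> Result<(), String> {
    // Assumption: one megabyte is counted as 1024 * 1024 bytes.
    let available_mb = available_bytes / (1024 * 1024);
    if available_mb < REQUIRED_MB {
        Err(format!(
            "Insufficient disk space: need {}MB, have {}MB",
            REQUIRED_MB, available_mb
        ))
    } else {
        Ok(())
    }
}
```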

Migrating from Plato

Cadmus is a fork of Plato and uses the same Settings.toml format, so migrating is mostly a matter of copying your settings file across.

Copy your settings

| Build | Plato settings | Cadmus settings |

|---|---|---|

| Stable | /mnt/onboard/.adds/plato/Settings.toml | /mnt/onboard/.adds/cadmus/Settings.toml |

| Test | /mnt/onboard/.adds/plato/Settings.toml | /mnt/onboard/.adds/cadmus-tst/Settings.toml |

Copy the file as-is into the Cadmus folder so it is named Settings.toml (for example, /mnt/onboard/.adds/cadmus/Settings.toml or /mnt/onboard/.adds/cadmus-tst/Settings.toml).

The [[libraries]] section is the most important part: it tells Cadmus where your books live and drives the reading-progress import on first launch. On first launch, Cadmus will also move this file into its Settings/ folder automatically.

[[libraries]]

name = "On Board"

path = "/mnt/onboard"

mode = "database"

Important

Make sure each [[libraries]] entry has the correct path and name. If a path doesn’t match what’s on disk, Cadmus skips that library’s import.

What happens on first launch

When Cadmus starts for the first time it automatically imports your data from each library listed in settings:

| Source | What’s imported |
|---|---|
| .metadata.json | Book metadata (title, author, …) and reading progress |
| .reading-states/<fp>.json | Reading progress for books not already covered by the above |

Both database mode and filesystem mode libraries are handled. Cadmus reads .reading-states/ in all cases, so current page, bookmarks, and annotations carry over regardless of which mode you used in Plato.

Note

After a successful import the original files are renamed:

- .metadata.json → .metadata.json.imported

- .reading-states/ → .reading-states.imported

These renamed files are just a safety backup. Once you’ve confirmed everything looks right you can delete them.

Note

Cadmus also removes the .thumbnail-previews/ folder and regenerates thumbnails itself.

Re-running the import

If the import went wrong (for example, the library path was incorrect in settings), you can start it fresh:

- Rename .metadata.json.imported back to .metadata.json and .reading-states.imported back to .reading-states/ in each library directory.

- Delete the Cadmus SQLite database:

| Build | Database path |
|---|---|
| Stable | /mnt/onboard/.adds/cadmus/cadmus.sqlite |
| Test | /mnt/onboard/.adds/cadmus-tst/cadmus.sqlite |

- Restart Cadmus. The import will run again from scratch.

If something still looks wrong after re-running, check the logs for details. See Troubleshooting for where to find them.

Settings

Cadmus reads settings from Settings/Settings-*.toml.

Settings can be changed via Main Menu → Settings, which opens the built-in settings editor.

Legend:

- ✏️ Editable in the settings editor

- 🔑 Required for feature to work

- 🧪 Only available in test builds

- 📱 Kobo

General Settings

keyboard-layout

✏️

Keyboard layout to use for text input.

- Possible values: "English", "Russian".

keyboard-layout = "English"

sleep-cover

✏️

Handle the magnetic sleep cover event.

sleep-cover = true

auto-share

✏️

Automatically enter shared mode when connected to a computer, skipping the “Share storage via USB?” prompt.

Tip

Turn this on if you update Cadmus via USB often — you won’t have to confirm the sharing dialog each time you plug in.

auto-share = false

auto-suspend

✏️

Number of minutes of inactivity after which the device will automatically go to sleep.

- Zero means never.

auto-suspend = 30.0

auto-power-off

✏️

Delay in days after which a suspended device will power off.

- Zero means never.

auto-power-off = 3.0

button-scheme

✏️

Defines how the back and forward buttons are mapped to page forward and page backward actions.

- Possible values: "natural", "inverted".

button-scheme = "natural"

locale

✏️

The preferred language for the user interface, using BCP 47 format (e.g., "en-US", "de-DE").

This setting is optional. When not set, en-GB is used.

locale = "en-GB"

Reader

Settings that control the reading experience.

reader.finished

✏️

What to do when you finish reading a book.

Possible values:

- "notify" (show a notification)

- "close" (close the book and go back)

- "go-to-next" (open the next book in the library)

[reader]

finished = "close"

Libraries

✏️

Document library configuration. Each library has a name, path, and mode.

[[libraries]]

name = "On Board"

path = "/mnt/onboard"

mode = "database"

libraries.name

✏️

Display name for the library.

libraries.path

✏️

Directory path containing documents.

libraries.mode

✏️

Library indexing mode.

- Possible values: "database", "filesystem".

libraries.finished

✏️

Override the reader.finished setting for this specific library.

When set, this takes precedence over the global reader setting.

- Possible values: "notify", "close", "go-to-next".

- Leave unset to inherit the global reader.finished setting.

[[libraries]]

name = "KePub"

path = "/mnt/onboard/.kobo/kepub"

finished = "go-to-next"
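The precedence rule can be pictured as an optional per-library value falling back to the global one. The sketch below is illustrative only: the enum and function names are hypothetical and do not come from the Cadmus source.

```rust
/// The three documented values of the `finished` setting.
#[derive(Debug, Clone, Copy, PartialEq)]
enum FinishedAction {
    Notify,
    Close,
    GoToNext,
}

/// Hypothetical resolution of the effective action for one library:
/// a value set under [[libraries]] wins, otherwise reader.finished applies.
fn effective_finished(
    global: FinishedAction,
    library_override: Option<FinishedAction>,
) -> FinishedAction {
    library_override.unwrap_or(global)
}
```

With the settings shown above, the KePub library would resolve to go-to-next, while a library without a finished key would fall back to whatever [reader] specifies.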

Intermissions

✏️

Defines the images displayed when entering an intermission state.

[intermissions]

suspend = "logo:"

power-off = "logo:"

share = "logo:"

intermissions.suspend

✏️

Image displayed when the device enters sleep mode.

Setting this to "calendar:" also enables the calendar refresh: every 5 minutes, the device wakes, shows the calendar, and then goes back to sleep automatically.

- Possible values: "logo:" (built-in logo), "cover:" (current book cover), "calendar:" (built-in calendar), or a path to a custom image file.

intermissions.power-off

✏️

Image displayed when the device powers off.

- Possible values: "logo:" (built-in logo), "cover:" (current book cover), or a path to a custom image file.

intermissions.share

✏️

Image displayed when entering USB sharing mode.

- Possible values: "logo:" (built-in logo), "cover:" (current book cover), or a path to a custom image file.

Import

These settings control how Cadmus imports documents from your device. They are available in the Settings → Import menu.

import.startup-trigger

✏️

Automatically import new books when Cadmus starts.

[import]

startup-trigger = true

Tip

If this is turned off, you can still trigger an import manually from the home screen: tap the database icon (bottom-left corner) and choose Import.

import.sync-metadata

✏️

Re-extract metadata (title, author, etc.) whenever a document changes.

[import]

sync-metadata = true

import.metadata-kinds

File extensions of documents whose metadata is extracted during import.

[import]

metadata-kinds = ["epub", "pdf", "djvu"]

import.allowed-kinds

File extensions of documents considered during the import process.

[import]

allowed-kinds = ["djvu", "xps", "fb2", "txt", "pdf", "oxps", "cbz", "epub"]
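To illustrate how a list like allowed-kinds could gate the import, here is a minimal sketch that filters paths by extension. The function name is hypothetical, and the case-insensitive comparison is an assumption; the documentation does not say how Cadmus compares extensions.

```rust
use std::path::Path;

/// Hypothetical filter: returns true when the file's extension appears in
/// `allowed_kinds`. Case-insensitive matching is an assumption of this sketch.
fn is_allowed(path: &str, allowed_kinds: &[&str]) -> bool {
    // Files without an extension (or with a non-UTF-8 one) are rejected.
    let ext = match Path::new(path).extension().and_then(|e| e.to_str()) {
        Some(ext) => ext,
        None => return false,
    };
    allowed_kinds.iter().any(|kind| kind.eq_ignore_ascii_case(ext))
}
```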

OTA

The OTA feature downloads builds from GitHub.

Authentication for main branch and PR builds uses the GitHub device auth flow. When you select a build that requires authentication, Cadmus displays a short code and a URL. Visit github.com/login/device on any device and enter the code. Cadmus continues the download automatically once you authorize.

The token is saved to disk after the first authorization so you will not be prompted again on subsequent downloads.

For step-by-step instructions with screenshots, see the OTA updates guide.

Telemetry

Cadmus writes JSON logs to disk. When the build enables the tracing feature, it can also export logs to an OpenTelemetry endpoint.

These settings are available in the Settings → Telemetry menu.

Important

Changes to these settings only take effect after restarting Cadmus. The application initializes telemetry on startup.

logging

[logging]

enabled = true

level = "info"

max-files = 3

directory = "logs"

# otlp-endpoint = "https://otel.example.com:4318"

logging.enabled

✏️

Enable or disable structured JSON logging.

[logging]

enabled = true

logging.level

✏️

Minimum log level to record.

- Possible values: "trace", "debug", "info", "warn", "error".

[logging]

level = "info"

logging.max-files

Number of log files to keep. Only the most recent N files are kept — older ones are deleted automatically when Cadmus starts.

- Default: 3

- Set to 0 to keep all log files.

[logging]

max-files = 3
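The retention rule can be sketched as "sort newest first, keep the first N, delete the rest". The code below is a minimal illustration under stated assumptions: the function name is hypothetical, and each file is represented as a (name, modification timestamp) pair, which may not be how Cadmus orders its log files.

```rust
/// Hypothetical retention helper: given (file name, modified timestamp) pairs,
/// returns the names that fall outside the `max_files` most recent ones.
/// A `max_files` of 0 keeps everything, matching the documented behaviour.
fn files_to_delete(mut files: Vec<(String, u64)>, max_files: usize) -> Vec<String> {
    if max_files == 0 {
        return Vec::new();
    }
    // Newest first: larger timestamps sort to the front.
    files.sort_by(|a, b| b.1.cmp(&a.1));
    // Everything after the first `max_files` entries is due for deletion.
    files
        .into_iter()
        .skip(max_files)
        .map(|(name, _)| name)
        .collect()
}
```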

logging.otlp-endpoint

✏️ (only when the tracing feature is enabled)

Optional OTLP endpoint for exporting logs to an OpenTelemetry collector.

[logging]

otlp-endpoint = "https://otel.example.com:4318"

Environment override: OTEL_EXPORTER_OTLP_ENDPOINT takes precedence over logging.otlp-endpoint.
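The override order can be sketched as follows. Both function names are hypothetical, and how Cadmus treats an empty environment variable is not documented, so this sketch simply treats any set value as present.

```rust
use std::env;

/// Hypothetical precedence rule: the environment value wins when present,
/// otherwise the value from Settings is used.
fn resolve_endpoint(env_value: Option<String>, setting: Option<String>) -> Option<String> {
    env_value.or(setting)
}

/// Reads the real environment variable and applies the rule above.
/// `setting` stands in for the parsed logging.otlp-endpoint value.
fn otlp_endpoint(setting: Option<String>) -> Option<String> {
    resolve_endpoint(env::var("OTEL_EXPORTER_OTLP_ENDPOINT").ok(), setting)
}
```

The same shape would apply to PYROSCOPE_SERVER_URL and logging.pyroscope-endpoint.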

logging.pyroscope-endpoint

✏️ (only when the profiling feature is enabled)

Optional Pyroscope server URL for continuous profiling. When set, Cadmus starts both a heap profiling agent (via jemalloc) and a CPU profiling agent (via pprof) that push profiles to this endpoint.

[logging]

pyroscope-endpoint = "http://localhost:4040"

Environment override: PYROSCOPE_SERVER_URL takes precedence over logging.pyroscope-endpoint.

logging.enable-kern-log

🧪 📱 ✏️

Captures kernel logs via logread -F and forwards them to structured logging with the target cadmus_core::logging:kern.

[logging]

enable-kern-log = false

logging.enable-dbus-log

🧪 📱 ✏️

Captures D-Bus signals via the built-in zbus-based DbusMonitorTask and forwards them to structured logging.

[logging]

enable-dbus-log = false

Settings Retention

Cadmus stores each version’s settings in a separate file in the Settings/ directory (for example, Settings-v1.2.3.toml).

This ensures backward and forward compatibility when you upgrade.

settings-retention

Number of recent version settings files to keep. Only the most recent N version files are kept. When a new version is saved, older versions beyond this limit are deleted automatically.

- Default: 3

- Set to 0 to keep all version files

settings-retention = 3

User Interface

This section explains the different parts of Cadmus you interact with while reading and managing your books.

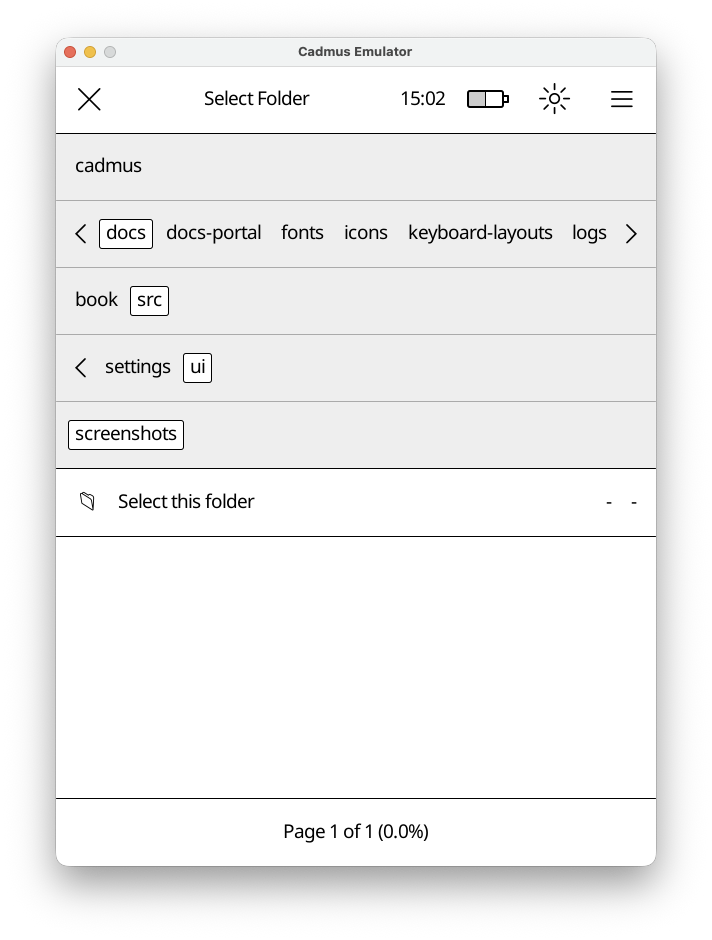

File Chooser

The file chooser helps you pick files or folders. It appears whenever Cadmus needs you to select one, e.g. to pick a screen saver.

How It Works

The file chooser has three main parts:

- Top Bar - Shows what you’re selecting (“Select File”, “Select Folder”, etc.) and a close button

- Navigation Bar - Shows folders you can tap to browse deeper

- File List - Shows files in the current folder (or a special “Select this folder” option)

Navigation

Tap any folder name in the navigation bar to open it. The bar expands automatically if there are many folders. Tap and drag the separator line below the folders to resize the navigation area.

Selection Modes

The file chooser adapts based on what you need:

- File selection mode

- Folder selection mode

- File or folder selection mode

The title will indicate the mode.

Settings

The settings editor lets you change how Cadmus works. Open it from Main Menu → Settings.

Settings are organised into tabs — tap a category to open it.

Categories

- General — language, sleep, auto-suspend, button layout

- Reader — what happens when you finish a book

- Libraries — add, edit, or remove your book libraries

- Dictionaries — download and manage offline dictionaries

- Import — control how new books are picked up automatically

- OTA — download Cadmus updates directly to your Kobo

- Telemetry — logging options

Dictionaries

Cadmus supports offline word definitions. You can look up any word while reading by long-pressing it. Dictionaries are stored on your device and work without an internet connection once downloaded.

Cadmus integrates with reader-dict, an open-source project that provides high-quality monolingual dictionaries (where you look up a word and get a definition in the same language) for many languages.

Opening the Dictionaries Tab

Go to Main Menu → Settings → Dictionaries.

You will see a list of available languages. Each row shows the language code and its current status.

Statuses

| Status | What it means |

|---|---|

| Download | Not yet on your device — tap to download |

| Downloading | A download is in progress |

| Installed | Ready to use |

| Update Available | A newer version is available |

Downloading a Dictionary

Important

Your device must be connected to Wi-Fi before you can download a dictionary.

- Open Main Menu → Settings → Dictionaries.

- Find the language you want.

- Tap Download next to it.

A progress notification appears at the top of the screen while the file downloads. Once the download finishes, Cadmus begins indexing the dictionary automatically.

Updating a Dictionary

When a newer version is available the status shows Update Available.

- Tap the language row.

- Select Update from the menu.

The updated dictionary replaces the old one automatically.

Re-downloading a Dictionary

If a dictionary is already installed you can re-download it to get a fresh copy:

- Tap the language row.

- Select Re-download from the menu.

Deleting a Dictionary

- Tap the language row.

- Select Delete from the menu.

The dictionary files are removed from your device.

How Indexing Works

After you download, update, or re-download a dictionary, Cadmus needs to index it before you can look up words. Indexing reads every word in the dictionary and stores it in a database on disk so that lookups are fast without loading the entire dictionary into memory. This is especially important on devices with limited memory.

A notification with a progress bar appears at the top of the screen while indexing is in progress.

Note

You can keep reading while indexing runs in the background. Words that have already been indexed are available for lookup right away, so you may get partial results until indexing finishes.

What happens when you restart your Kobo

If your Kobo restarts or shuts down while indexing is still running, Cadmus picks up where it left off the next time it starts. It does not start over from the beginning.

When does re-indexing happen

Cadmus automatically re-indexes a dictionary when you:

- Update it to a newer version

- Re-download it

- Delete it (the old index is removed)

You do not need to trigger indexing yourself — it happens automatically whenever the dictionary files change.

Where Dictionaries are Stored

Downloaded dictionaries live in the dictionaries/reader-dict/<lang>/ folder on your device. Each language gets its own subfolder containing a .dict.dz (or .dict) and a .index file.

Library

The library is where all your books live. Cadmus scans your device’s storage and keeps track of everything you’ve added, so you can browse, search, and organize your collection right on the device.

What’s in this section

- Importing books — how Cadmus finds and adds books to your library

Importing Books

Cadmus scans your device’s storage and adds books to its database automatically. This process is called importing.

Import on startup

By default, Cadmus imports any new books it finds every time it starts. Copy files to your device, restart the app, and they’ll appear in your library right away.

Tip

If you have a large library, consider turning off import on startup. Scanning a lot of files takes time and drains the battery. You can still import manually whenever you need to.

To disable it, open Settings and set import.startup-trigger to false.

Manual import

You can trigger an import at any time without restarting:

- On the home screen, tap the library name in the bottom-left corner.

- In the menu that appears, tap Database → Import.

Cadmus will scan your library folder and add any books it hasn’t seen before.

Troubleshooting

Logs

When something isn’t working right, logs will help with figuring out what went wrong. If you’re reporting an issue, sharing your logs makes it much easier to debug.

Where to find Cadmus logs

Cadmus saves logs in a logs folder. Here’s where to find it on each platform:

| Platform | Stable build | Test build |

|---|---|---|

| Kobo | /mnt/onboard/.adds/cadmus/logs | /mnt/onboard/.adds/cadmus-tst/logs |

Each time you start Cadmus, it creates a new log file with a unique ID. By default, only the 3 most recent log files are kept, and older ones are deleted automatically. You can change this with the logging.max-files setting.

The log files look like this:

cadmus-019cf7e3-ef3a-7752-846f-83b92ac90634.json

Finding your run ID

Every time Cadmus starts, it prints a run ID to help you identify which log file belongs to that session. You can find this in:

- info.log - The startup log in the Cadmus folder. Look for the line that says Cadmus run started with ID: followed by a string of letters and numbers. For example:

  Cadmus run started with ID: 019cf7e3-ef3a-7752-846f-83b92ac90634 (version 0.10.0)

  Copy only the UUID part, i.e. the string of letters and numbers between ID: and the (version text.

- Console output - If you’re running Cadmus from a terminal, the same run ID is printed when it starts.
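If you prefer to pull the run ID out programmatically, the idea can be sketched like this. Both helpers are hypothetical; the line format and the cadmus-<id>.json file name pattern are taken from the examples on this page.

```rust
/// Hypothetical helper: extracts the run ID from a startup log line such as
/// "Cadmus run started with ID: <uuid> (version 0.10.0)".
fn run_id_from_line(line: &str) -> Option<&str> {
    let (_, rest) = line.split_once("Cadmus run started with ID:")?;
    // The ID is the first whitespace-delimited token after the marker,
    // which stops before the "(version ...)" part.
    rest.split_whitespace().next()
}

/// Builds the matching log file name, following the example shown earlier.
fn log_file_name(run_id: &str) -> String {
    format!("cadmus-{}.json", run_id)
}
```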

Kernel logs

Kernel logs can be useful for debugging lower-level system issues, for example a kernel panic that triggers a device reboot.

Kernel logs are only available in test builds. If you’re using a test build and want to include kernel logs:

- Open Main Menu → Settings

- Go to Telemetry

- Enable kernel logs

- Restart Cadmus

Kernel logs will then be saved in the same log file as your Cadmus logs.

Note

Kernel logs use more disk space, so don’t forget to turn them back off.

Crashloop recovery

If Cadmus crashes 3 times in a row, it will exit back to Nickel instead of restarting. This prevents the device from getting stuck in an infinite loop of crashes.

When this happens:

- Check info.log in the Cadmus folder for the panic error

- The crash counter resets when you start Cadmus manually (using the restart option in the menu or rebooting)
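The behaviour described above amounts to a small consecutive-crash counter. The sketch below only illustrates that logic; the type and method names are hypothetical, and where Cadmus actually stores the counter between restarts is not documented here.

```rust
/// Number of consecutive crashes after which Cadmus gives up, per the text above.
const MAX_CONSECUTIVE_CRASHES: u32 = 3;

#[derive(Debug, PartialEq)]
enum NextStep {
    Restart,
    ExitToNickel,
}

/// Hypothetical counter; a real launcher would have to persist this value.
struct CrashCounter {
    consecutive: u32,
}

impl CrashCounter {
    fn new() -> Self {
        Self { consecutive: 0 }
    }

    /// Records one crash and decides what the launcher should do next.
    fn record_crash(&mut self) -> NextStep {
        self.consecutive += 1;
        if self.consecutive >= MAX_CONSECUTIVE_CRASHES {
            NextStep::ExitToNickel
        } else {
            NextStep::Restart
        }
    }

    /// Called on a manual start (menu restart or reboot).
    fn reset(&mut self) {
        self.consecutive = 0;
    }
}
```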

Development Environment Setup

Cadmus uses devenv with Nix to provide a reproducible development environment. This guide covers setup on both Linux and macOS.

Prerequisites

- Install Nix with flakes enabled. The easiest way is using the Determinate Nix Installer.

- Install devenv.

Quick Start

- Clone the repository and enter the devenv shell:

  git clone https://github.com/OGKevin/cadmus.git
  cd cadmus
  devenv shell

- Run the one-time setup to build native dependencies:

  cargo xtask setup-native

- Download the packaged runtime assets used by Kobo builds:

  cargo xtask download-assets

  Note

  cadmus-core generates some compile-time metadata from the bundled asset directories. For Kobo builds, make sure bin/, resources/, and hyphenation-patterns/ are present before cargo xtask build-kobo so the generated asset list is complete.

- Run the emulator:

  cargo xtask run-emulator

Available Commands

Once inside the devenv shell, these commands are available:

| Command | Description |
|---|---|
| cargo xtask setup-native | Build MuPDF for native development (run once) |
| cargo xtask download-assets | Download packaged Plato runtime assets |
| cargo xtask test | Run the test suite across the feature matrix |
| cargo xtask run-emulator | Run the emulator |
| cargo xtask build-kobo | Cross-compile for Kobo device |
| cargo xtask dist | Assemble the Kobo distribution directory |
| cargo xtask bundle | Package KoboRoot.tgz for installation |
| cadmus-dev-otel | Run emulator with tracing and profiling enabled |
| devenv up | Start observability stack (Grafana, Tempo, Loki) |
| cargo xtask docs | Build documentation portal (mdBook + Cargo docs) |
| cadmus-docs-serve | Serve documentation portal locally on port 1111 |
| cadmus-translate | Generate the docs translation template (.pot) |

Run cargo xtask --help to see all available subcommands, or cargo xtask <cmd> --help for options on a specific command.

Alternatively, have a look at the rustdocs for xtask.

Tasks

The devenv environment uses tasks to manage build dependencies.

Tasks are defined in devenv.nix and can be run with devenv tasks run <task>.

Available Tasks

| Task | Description | Dependencies |
|---|---|---|
| docs:build | Build documentation EPUB (only rebuilds if files changed) | None |
| deps:native | Build MuPDF and wrapper for native development | None |
| build:kobo | Build for Kobo device | docs:build |

All tasks delegate to cargo xtask under the hood.

How Tasks Work

Tasks with dependencies automatically run their dependencies first. For example:

# This will first run docs:build (if needed), then build for Kobo

devenv tasks run build:kobo

The docs:build task uses execIfModified to only rebuild when documentation files have actually changed.

Kobo Build Notes

- cargo xtask download-assets must run before cargo xtask build-kobo.

- OTA updates delete Cadmus-owned bundled files before reboot, then Kobo extracts the new KoboRoot.tgz over the install directory.

- User files outside the generated Cadmus-owned asset list must be preserved.

Documentation Portal

Cadmus provides a unified documentation portal that combines user guides, API reference, and contribution guides in one place.

Building and Serving Locally

To build the documentation portal:

cargo xtask docs

This runs the full build pipeline:

- Builds the mdBook user guide (docs/book/html/)

- Generates Rust API documentation (target/doc/)

- Builds the Zola landing page and integrates all documentation

To serve the portal locally with live reload:

cadmus-docs-serve

The portal will be available at http://localhost:1111 with automatic rebuilds when you change documentation files or Rust code.

Documentation Structure

The portal provides three integrated sections:

- Landing Page (/) - Overview and feature highlights

- User Guide (/guide/) - User-facing documentation from mdBook

- API Reference (/api/) - Auto-generated Rust API documentation

All three sections are deployed as a single artifact to GitHub Pages at https://ogkevin.github.io/cadmus/.

Continuous Integration

Documentation is automatically built and validated on every pull request and deployed on push to main or master. The CI pipeline checks:

- mdBook documentation compiles

- Rust code documentation is valid

- Zola landing page builds successfully

Running Tests

Tests require the TEST_ROOT_DIR environment variable to be set. The easiest way to run the full test matrix is:

cargo xtask test

This sets TEST_ROOT_DIR automatically and runs tests across all feature combinations. To run a single feature combination:

cargo xtask test --features "emulator + test"

Or to run tests manually without xtask:

TEST_ROOT_DIR=$(pwd) cargo test

TEST_ROOT_DIR is automatically configured in CI but must be set manually when running cargo test directly.

Platform Support

Linux (Full Support)

Linux provides full development capabilities including:

- Native development (emulator, tests)

- Cross-compilation for Kobo devices using the Linaro ARM toolchain

- Git hooks (actionlint, shellcheck, shfmt, markdownlint, prettier)

The Linaro toolchain is automatically added to PATH and provides arm-linux-gnueabihf-* commands.

macOS (Full Support)

macOS supports full development capabilities including:

- Native development (emulator, tests)

- Cross-compilation for Kobo devices using the Linaro ARM toolchain

- Git hooks (actionlint, shellcheck, shfmt, markdownlint, prettier)

macOS-Specific Notes

MuPDF build: On macOS, the native setup script manually gathers pkg-config CFLAGS for system libraries because MuPDF’s build system doesn’t properly detect them on Darwin.

Observability Stack

The devenv includes a full observability stack for development:

# Start all services

devenv up

# In another terminal, run the instrumented emulator

cadmus-dev-otel

Services available after devenv up:

| Service | URL | Purpose |

|---|---|---|

| Grafana | http://localhost:3000 | Dashboards and exploration |

| Tempo | http://localhost:3200 | Distributed tracing |

| Loki | http://localhost:3100 | Log aggregation |

| Prometheus | http://localhost:9090 | Metrics |

| OTLP Collector | http://localhost:4318 | Telemetry ingestion |

| Pyroscope | http://localhost:4040 | Continuous profiling |

For more details on telemetry, see Telemetry.

Troubleshooting

Shell takes a long time to start

The first devenv shell invocation downloads and builds dependencies, which can take several minutes. Subsequent invocations are cached and should be fast.

Tests fail with “TEST_ROOT_DIR must be set”

Set the environment variable before running tests:

TEST_ROOT_DIR=$(pwd) cargo test

Local Configuration

Create devenv.local.nix to override settings without modifying the tracked configuration:

{ pkgs, ... }:

{

env = {

# Example: Set TEST_ROOT_DIR automatically

TEST_ROOT_DIR = builtins.getEnv "PWD";

};

}

This file is gitignored and won’t affect other contributors.

Event System

Cadmus uses a tree-based event system where views are organized hierarchically and events flow through two distinct channels: the Hub and the Bus. Understanding the difference between these channels is essential for implementing correct event handling in views.

Tip

CSS is hard, so this page might render better at 80% zoom on smaller screens. I tried to make the Mermaid diagrams as big as possible to make them easier to read, which made them overflow a bit.

You might also want to hide the sidebar.

Overview

The UI is a tree of View objects. Each view can have children, forming a hierarchy like:

flowchart TD

Home["Home (root view)"]

TopBar["TopBar"]

Shelf["Shelf"]

Book1["Book"]

Book2["Book"]

BottomBar["BottomBar"]

Dialog["Dialog ← overlay child"]

Label["Label"]

Button["Button"]

Home --> TopBar

Home --> Shelf

Home --> BottomBar

Home --> Dialog

Shelf --> Book1

Shelf --> Book2

Dialog --> Label

Dialog --> Button

Events enter the tree from the main loop and travel top-down (root to leaves), with the highest z-level children checked first. Views can communicate back up the tree via the bus, or globally via the hub.

Hub vs Bus

The two channels serve fundamentally different purposes:

flowchart TB

subgraph MainLoop["Main Loop"]

rx["rx.recv()"]

match["match evt { ... }"]

handle["handle_event(root, &evt, &tx, &bus, ...)"]

Hub["Hub (tx)"]

Bus["Bus (VecDeque)"]

rx --> match

match -->|dispatches to view tree| handle

handle --> Hub

handle --> Bus

Bus -->|unhandled bus events forwarded to hub| Hub

end

Hub (Sender<Event>)

The hub is an mpsc::Sender<Event> — a global channel that sends events to the main loop.

Events sent to the hub are processed in the next iteration of the main loop, not immediately.
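The deferral can be illustrated with a plain `std::sync::mpsc` channel. This is only a sketch: the `Event` enum and `run_loop` function below are hypothetical stand-ins, not Cadmus's actual types. It shows that an event sent while another event is being handled is merely queued, and is picked up by a later iteration of the loop.

```rust
use std::sync::mpsc;

// Stand-in for Cadmus's Event type; these variants are hypothetical.
#[derive(Debug, PartialEq)]
enum Event {
    Tap,
    Close(u32),
}

// Runs a toy main loop and returns the order in which events were processed.
fn run_loop() -> Vec<Event> {
    let (hub, rx) = mpsc::channel();
    let mut processed = Vec::new();

    // Seed the loop with one input event.
    hub.send(Event::Tap).unwrap();

    // try_recv keeps the sketch from blocking once the queue is empty.
    while let Ok(evt) = rx.try_recv() {
        if evt == Event::Tap {
            // A handler reacts by sending to the hub. The new event is only
            // queued here; a later loop iteration receives it.
            hub.send(Event::Close(7)).unwrap();
        }
        processed.push(evt);
    }
    processed
}
```

Calling `run_loop()` yields the tap first and the close second, even though the close was sent while the tap was still being handled.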

Use the hub when:

- The event needs to be handled by the main loop directly (e.g., Event::Close, Event::Open, Event::Notification)

- The event should reach all views in a future dispatch cycle (e.g., Event::Focus)

- You need to communicate across unrelated parts of the view tree

// Close a view: handled by the main loop's match statement
hub.send(Event::Close(self.view_id)).ok();

// Show a notification: the main loop creates the Notification view
hub.send(Event::Notification(NotificationEvent::Show(msg))).ok();

// Set focus: dispatched to all views in the next loop iteration
hub.send(Event::Focus(Some(ViewId::SearchInput))).ok();

Bus (VecDeque<Event>)

The bus is a local VecDeque<Event> that passes events from a child to its parent. Events

placed on the bus are handled synchronously during the current dispatch cycle.

Use the bus when:

- A child needs to communicate with its direct parent

- The parent is expected to handle the event (e.g., a button telling its parent dialog it was pressed)

- The event should bubble up through the view hierarchy

// Child tells parent about a submission
bus.push_back(Event::Submit(self.view_id, self.text.clone()));

// Child requests parent to close it
bus.push_back(Event::Close(ViewId::MarginCropper));

Bus Bubbling

When a bus event is not handled by any ancestor view, it reaches the root and gets forwarded to the hub for processing in the next main loop iteration:

// End of main loop iteration: unhandled bus events become hub events
while let Some(ce) = bus.pop_front() {
    tx.send(ce).ok();
}

Event Dispatch

The core dispatch function in view/mod.rs controls how events flow through the tree:

flowchart TD

Start["handle_event(view, event)"] --> CheckLeaf{"Is view a leaf<br/>(no children)?"}

CheckLeaf -->|YES| HandleDirect["call view.handle_event()<br/>return result"]

CheckLeaf -->|NO| ReverseIter["Step 1 - Iterate children in REVERSE order<br/>(highest z-level first)"]

ReverseIter --> CheckChildren["For each child:<br/>call handle_event(child, event)"]

CheckChildren --> Captured{"Child returns true?"}

Captured -->|YES| SetCaptured["captured = true<br/>BREAK"]

Captured -->|NO| NextChild["Next child"]

NextChild --> CheckChildren

SetCaptured --> ProcessBus["Step 2 - Process child_bus events"]

CheckChildren -->|No more children| ProcessBus

ProcessBus --> BusEvent{"For each event<br/>on child_bus"}

BusEvent --> HandleBus["call view.handle_event(child_evt)"]

HandleBus --> ViewHandles{"View handles it?"}

ViewHandles -->|YES| RemoveBus["remove from bus"]

ViewHandles -->|NO| KeepBus["keep on bus<br/>(bubbles up)"]

RemoveBus --> BusEvent

KeepBus --> BusEvent

BusEvent -->|No more bus events| CheckCaptured{"NOT captured<br/>by any child?"}

CheckCaptured -->|YES| HandleParent["Step 3 - call view.handle_event(event)<br/>return captured || view's result"]

CheckCaptured -->|NO| ReturnCaptured["return captured"]

Key Rules

- Reverse iteration: Children are checked from last to first (highest z-level first). This ensures overlays like dialogs and menus receive events before the views beneath them.

- Short-circuit on capture: Once a child returns true, no other children or the parent view receive the event. This is why the Dialog’s outside-tap handler works: it returns true to prevent the event from reaching views behind it.

- Parent only runs if uncaptured: The parent’s handle_event is only called if no child captured the event (captured || view.handle_event(...)). If captured is true, the parent is short-circuited.
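The dispatch rules can be sketched in a few dozen lines. This is a simplified model under stated assumptions, not the code in view/mod.rs: the `View` struct, its boolean flags, the `on_event` handler, and the `node` constructor are all hypothetical, and the `seen` vector exists only to record which handlers ran.

```rust
use std::collections::VecDeque;

// Simplified stand-ins for Cadmus's types; names and fields are hypothetical.
#[derive(Debug, Clone, Copy, PartialEq)]
enum Event {
    Tap,
    Submit,
}

type Bus = VecDeque<Event>;

struct View {
    name: &'static str,
    captures_tap: bool,   // own handler returns true for Tap
    submits_on_tap: bool, // own handler pushes Submit onto the bus for Tap
    handles_submit: bool, // own handler returns true for Submit
    children: Vec<View>,
}

impl View {
    // The view's own handler. `seen` records which handlers ran, in order.
    fn on_event(&mut self, evt: Event, bus: &mut Bus, seen: &mut Vec<&'static str>) -> bool {
        seen.push(self.name);
        match evt {
            Event::Tap => {
                if self.submits_on_tap {
                    bus.push_back(Event::Submit);
                }
                self.captures_tap
            }
            Event::Submit => self.handles_submit,
        }
    }
}

// Recursive dispatch following the three steps in the flowchart.
fn dispatch(view: &mut View, evt: Event, bus: &mut Bus, seen: &mut Vec<&'static str>) -> bool {
    if view.children.is_empty() {
        return view.on_event(evt, bus, seen);
    }
    let mut captured = false;
    let mut child_bus = Bus::new();
    // Step 1: offer the event to children, last (topmost) child first.
    for child in view.children.iter_mut().rev() {
        if dispatch(child, evt, &mut child_bus, seen) {
            captured = true;
            break;
        }
    }
    // Step 2: let this view handle what its children put on the bus;
    // anything it declines moves to the parent's bus.
    while let Some(child_evt) = child_bus.pop_front() {
        if !view.on_event(child_evt, bus, seen) {
            bus.push_back(child_evt);
        }
    }
    // Step 3: this view only sees the original event if no child captured it.
    captured || view.on_event(evt, bus, seen)
}

// Convenience constructor for the sketch.
fn node(name: &'static str, captures_tap: bool, children: Vec<View>) -> View {
    View { name, captures_tap, submits_on_tap: false, handles_submit: false, children }
}
```

With a root whose children are a shelf followed by a dialog, a tap reaches only the dialog: the shelf and the root never run their handlers, which is the short-circuit behaviour described in the rules above.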

Main Loop Event Handling

The main loop (app.rs) receives events from the hub and handles them in two

stages. First, TaskManager gets a chance to observe every event — this is

where ImportLibrary, ImportFinished, and ReindexDictionaries are

intercepted to schedule or coalesce background tasks. Then the event enters the

large match statement, where some events are dispatched into the view tree

and others are handled directly:

flowchart TB

subgraph MainLoop["Main Loop"]

direction TB

Recv["rx.recv() → evt"]

TaskManager["TaskManager::handle_event()<br/>(ImportLibrary, ImportFinished, ReindexDictionaries)"]

Gesture["Event::Gesture(Tap/Swipe/...)"]

GestureAction["Dispatched into view tree via handle_event()"]

Close["Event::Close(id)"]

CloseAction["Handled directly:<br/>locate_by_id() + remove"]

Notification["Event::Notification(...)"]

NotificationAction["Handled directly:<br/>create/update Notification"]

Open["Event::Open(info)"]

OpenAction["Handled directly:<br/>push Reader onto history"]

Focus["Event::Focus(...)"]

FocusAction["Dispatched into view tree via handle_event()"]

Select["Event::Select(...)"]

SelectAction["Some handled directly,<br/>some dispatched"]

ImportLibrary["Event::ImportLibrary / ImportFinished / ReindexDictionaries"]

ImportAction["Handled by TaskManager<br/>(schedules/coalesces tasks)"]

Recv --> TaskManager

TaskManager --> Gesture

TaskManager --> Close

TaskManager --> Notification

TaskManager --> Open

TaskManager --> Focus

TaskManager --> Select

TaskManager --> ImportLibrary

Gesture --> GestureAction

Close --> CloseAction

Notification --> NotificationAction

Open --> OpenAction

Focus --> FocusAction

Select --> SelectAction

ImportLibrary --> ImportAction

end

Event::Close

Event::Close(ViewId) is handled directly by the main loop using locate_by_id(), which

searches only the top-level children of the root view:

Event::Close(id) => {
    if let Some(index) = locate_by_id(view.as_ref(), id) {
        let rect = overlapping_rectangle(view.child(index));
        rq.add(RenderData::expose(rect, UpdateMode::Gui));
        view.children_mut().remove(index);
    }
}

Key limitation: The main loop can only remove direct children of the root. To close nested views, either:

- Use the parent’s ViewId (via hub): Removes the entire parent container

- Use the bus: Parent handles the close and removes just the specific child

See Why ViewId Matters for Close and Closing Nested Views via the Bus for details.
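The limitation follows from a non-recursive search. The sketch below assumes a much simpler `View` and `ViewId` than the real ones, and its `locate_by_id` is a stand-in with a different signature from the function used in app.rs. It only illustrates why a nested ID is not found while a top-level ID is.

```rust
// Hypothetical stand-ins for Cadmus's ViewId and View types.
#[derive(Debug, Clone, Copy, PartialEq)]
enum ViewId {
    Root,
    Home,
    Ota,
    Dialog,
}

struct View {
    id: ViewId,
    children: Vec<View>,
}

// Searches only the direct children of `root`; it does not recurse.
fn locate_by_id(root: &View, id: ViewId) -> Option<usize> {
    root.children.iter().position(|child| child.id == id)
}

// Mimics the hub-side close: removes a top-level child and reports success.
fn close(root: &mut View, id: ViewId) -> bool {
    match locate_by_id(root, id) {
        Some(index) => {
            // Removing the child also drops everything nested inside it.
            root.children.remove(index);
            true
        }
        None => false,
    }
}
```

In this model, closing a dialog nested inside the OTA view does nothing, while closing the OTA view removes it together with the dialog it contains.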

Practical Example: Dialog Outside-Tap

When a Dialog is tapped outside its bounds, the following sequence occurs:

sequenceDiagram

actor User

participant MainLoop as Main Loop

participant ViewTree as View Tree

participant Dialog as Dialog

participant Parent as Parent View

Note over User,Parent: 1. User taps at (x, y) outside the dialog

User->>MainLoop: Tap gesture

Note right of MainLoop: 2. Receives Event::Gesture(Tap(point))

MainLoop->>ViewTree: Dispatches via handle_event()

Note over ViewTree: 3. View tree dispatch (reverse child order)<br/>Dialog checked first (highest z-level)

ViewTree->>Dialog: handle_event()

Note right of Dialog: - Tap outside self.rect<br/>- Matches outside-tap arm<br/>- Sends Event::Close(self.view_id) to hub<br/>- Returns true (captured)

Dialog-->>ViewTree: return true (captured)

Note over Parent: 4. Parent SHORT-CIRCUITED<br/>(captured = true)<br/>Parent's handle_event() never called

Note over MainLoop: 5. Next main loop iteration

MainLoop->>MainLoop: Receives Event::Close(dialog_view_id)

Note right of MainLoop: locate_by_id() finds dialog<br/>in top-level children<br/>Removes dialog from view tree

Why ViewId Matters for Close

When using the hub to close views, locate_by_id() only finds top-level children. If a

dialog is nested inside a parent, you must use the parent’s ViewId — this removes the

entire parent:

flowchart TD

Root["Root View"]

Home["Home"]

OtaView["OtaView (top-level)"]

Dialog["Dialog (nested)"]

Root --> Home

Root --> OtaView

OtaView --> Dialog

Note["hub.send(Event::Close(ViewId::Ota(Main)))<br/>→ Removes OtaView + Dialog"]

To close just the nested view without affecting siblings, use the bus instead (see below).

Closing Nested Views via the Bus

Send Event::Close via the bus to close nested views. Parents handle bus events synchronously

and can remove any child directly:

// Child sends close via bus
bus.push_back(Event::Close(ViewId::Dialog));

sequenceDiagram

participant Child as Button

participant Dialog as Dialog (parent)

participant Grandparent as OtaView

Child->>Child: handle_event()

Child-->>Dialog: bus: Event::Close(ViewId::Dialog)

Dialog->>Dialog: Returns false (not handled)

Dialog-->>Grandparent: Event bubbles up

Grandparent->>Grandparent: Removes Dialog from children

Comparison:

| Method | Code | Handler | Scope |

|---|---|---|---|

| Hub close | hub.send(Event::Close(id)) | Main loop | Top-level children only |

| Bus close | bus.push_back(Event::Close(id)) | Parent view | Any child |
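The receiving side of a bus close might look like the following sketch. It is an illustration under assumptions: `Container`, `handle_bus_event`, and `process_bus` are hypothetical names, and children are reduced to their IDs. The point is that the ancestor consumes the close event it can act on and leaves everything else on the bus to keep bubbling.

```rust
use std::collections::VecDeque;

// Hypothetical stand-ins; the real ViewId and Event have many more variants.
#[derive(Debug, Clone, Copy, PartialEq)]
enum ViewId {
    Dialog,
    Label,
}

#[derive(Debug, Clone, Copy, PartialEq)]
enum Event {
    Close(ViewId),
}

struct Container {
    children: Vec<ViewId>, // each child is reduced to its ID for brevity
}

impl Container {
    // Handles one bus event; returns true if the event was consumed.
    fn handle_bus_event(&mut self, evt: Event) -> bool {
        let Event::Close(id) = evt;
        match self.children.iter().position(|child| *child == id) {
            Some(index) => {
                self.children.remove(index);
                true
            }
            None => false,
        }
    }

    // Drains the child bus, keeping only the events this view did not consume.
    fn process_bus(&mut self, bus: &mut VecDeque<Event>) {
        bus.retain(|evt| !self.handle_bus_event(*evt));
    }
}
```

A close for a child the container owns is removed from the bus along with the child; a close for an unknown ID stays on the bus for the next ancestor.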

Example implementation:

impl View for Dialog {
    fn handle_event(&mut self, evt: &Event, hub: &Sender<Event>, bus: &mut Bus, ...) -> bool {
        match *evt {
            // Return false to bubble up so grandparent removes us
            Event::Close(ViewId::Dialog) => false,
            _ => false,
        }
    }
}

Summary

| Aspect | Hub | Bus |

|---|---|---|

| Type | mpsc::Sender<Event> | VecDeque<Event> |

| Scope | Global (main loop) | Local (parent-child) |

| Timing | Next loop iteration | Current dispatch cycle |

| Direction | View → Main loop | Child → Parent |

| Unhandled events | Processed by main loop match | Forwarded to hub |

| Use for | Close, Focus, Notifications | Submit, child-to-parent signals |

Telemetry

Cadmus has three related observability paths:

- logging for structured log export and local log files

- tracing for distributed tracing and OTLP export

- profiling for continuous profiling with Pyroscope

Use this section when you need to understand how those features fit together, what each one depends on, and how to run them locally.

Cadmus assigns each run a unique Run ID using UUID v7. That Run ID ties together local log files, OTLP exports, and profiling data for the same app session.
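A UUID v7 starts with a millisecond timestamp, so Run IDs sort in the order the sessions started. The sketch below hand-assembles the bit layout to make that visible. It is an assumption-laden illustration: Cadmus presumably relies on a UUID library, and `uuid_v7` and `format_uuid` are hypothetical helpers that take the random bits as parameters instead of generating them.

```rust
// Assembles a UUID v7 value: 48-bit Unix timestamp in milliseconds,
// 4-bit version (7), 12 random bits, 2-bit variant (0b10), 62 random bits.
fn uuid_v7(unix_ms: u64, rand_a: u16, rand_b: u64) -> u128 {
    let timestamp = (unix_ms as u128 & 0xFFFF_FFFF_FFFF) << 80;
    let version = 0x7u128 << 76;
    let a = (rand_a as u128 & 0x0FFF) << 64;
    let variant = 0b10u128 << 62;
    let b = rand_b as u128 & 0x3FFF_FFFF_FFFF_FFFF;
    timestamp | version | a | variant | b
}

// Formats the 128-bit value in the usual 8-4-4-4-12 hexadecimal grouping.
fn format_uuid(value: u128) -> String {
    let hex = format!("{:032x}", value);
    format!(
        "{}-{}-{}-{}-{}",
        &hex[0..8],
        &hex[8..12],
        &hex[12..16],
        &hex[16..20],
        &hex[20..32]
    )
}
```

Because the timestamp occupies the leading digits, an ID created at a later millisecond compares greater as a string than an earlier one, whatever the random bits are.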


Feature flags

- tracing enables structured logs, distributed traces, and OTLP export.

- profiling enables heap and CPU profiling with Pyroscope.

- telemetry enables both tracing and profiling together in the app.

Architecture

The telemetry stack is split into three layers:

- Logging writes newline-delimited JSON to disk and can export log records over OTLP.

- Tracing creates spans around instrumented operations and exports them over OTLP.

- Profiling samples CPU and heap activity and pushes profiles to Pyroscope.

When telemetry is enabled, Cadmus runs all three paths together. In local

development this matches the default observability stack exposed by

devenv up.

Local setup

The development environment includes a full observability stack. Use the

cadmus-dev-otel command to run the emulator with telemetry enabled.

Logging

Cadmus writes structured JSON logs to disk and can export logs to an OTLP

backend when the tracing feature is enabled.

What it does

- Writes newline-delimited JSON log files to the configured log directory

- Adds run metadata so a single app session can be traced through log output

- Optionally exports logs to an OpenTelemetry collector

Feature flag

- tracing enables OTLP log export and shared tracing/logging context

Log file format

Cadmus writes newline-delimited JSON files named like this:

cadmus-<run_id>.json

Each record includes these fields:

- timestamp

- level

- target

- fields

- spans

The spans array carries active tracing context so log records can be matched

back to the traced operation that emitted them.

Resource attributes

When OTLP export is enabled, log records include the same resource metadata as traces:

- service.name = cadmus

- service.version = <git describe output>

- cadmus.run_id = <uuid-v7>

- hostname = <system hostname>

Configuration

See the settings reference for the full logging configuration. The main options are:

- logging.enabled

- logging.level

- logging.max-files

- logging.directory

- logging.otlp-endpoint

OTEL_EXPORTER_OTLP_ENDPOINT overrides logging.otlp-endpoint when both are

set.
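The precedence rule amounts to "environment first, settings second". A minimal sketch, assuming hypothetical helper names (`resolve_otlp_endpoint`, `endpoint_from_env`) that are not taken from the Cadmus source:

```rust
use std::env;

// Picks the OTLP endpoint: the environment value wins over the configured one.
fn resolve_otlp_endpoint(env_value: Option<&str>, configured: Option<&str>) -> Option<String> {
    env_value.or(configured).map(str::to_owned)
}

// Reads the real environment variable and applies the same rule.
fn endpoint_from_env(configured: Option<&str>) -> Option<String> {
    let from_env = env::var("OTEL_EXPORTER_OTLP_ENDPOINT").ok();
    resolve_otlp_endpoint(from_env.as_deref(), configured)
}
```

Keeping the rule in a pure function makes it easy to test without touching the process environment.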

RUST_LOG overrides the configured log level. This is useful when you need

trace-level output for a single subsystem without editing Settings.toml.

# Enable debug logs globally

RUST_LOG=debug cargo run --features tracing

# Enable trace logs for a specific module

RUST_LOG=cadmus_core::view=trace,info cargo run --features tracing

Runtime behavior

When tracing is enabled, log export is initialized during app startup and

shut down during app exit.

Cadmus always writes local JSON logs. OTLP export is an additional sink layered on top when an endpoint is configured.


Tracing

Cadmus uses tracing instrumentation to capture execution flow through the

app and export spans to an OTLP backend.

What it does

- Adds spans around key operations in the app and core crates

- Captures timing, fields, and parent-child relationships between spans

- Exports traces to an OpenTelemetry collector when configured

Feature flag

- tracing enables tracing support in the codebase

Instrumentation

Most view and runtime chokepoints are instrumented with conditional compilation so tracing can be compiled out when the feature is disabled.

Each instrumented function can capture:

- function and module name

- selected input fields

- execution duration

- return values at TRACE level

- parent-child span relationships

View code is instrumented around two high-value paths:

- handle_event methods for event flow through the UI tree

- render methods for rendering and layout timing

For detailed instrumentation conventions, see

.github/instructions/rust-instrumentation.instructions.md.

Example: instrument a function

Use #[cfg_attr(feature = "tracing", tracing::instrument(...))] on functions

that should create a span for each call.

#[cfg_attr(
    feature = "tracing",
    tracing::instrument(skip(self, data), fields(book_id, size = data.len()))
)]
fn save_cover(&self, book_id: i64, data: &[u8]) -> Result<(), Error> {
    tracing::debug!(book_id, "Saving cover image");
    self.storage.save(book_id, data)?;
    Ok(())
}

Use skip(...) for large values, borrowed buffers, or types with noisy Debug

output. Add fields(...) for the identifiers you will actually query in Tempo

or logs.

Example: instrument a closure

For a short synchronous closure, create a span and run the closure inside it

with in_scope().

let sorted = tracing::info_span!("sorting", entry_count = entries.len()).in_scope(|| {
    let mut entries = entries;
    entries.sort_unstable();
    entries
});

This is the usual pattern when you want to time a single closure body without extracting it into a separate function.

OTLP export

When tracing is enabled, Cadmus initializes a tracer provider that exports to

<endpoint>/v1/traces using batch span processors.

This gives you distributed traces in backends like Tempo, Jaeger, or any other OTLP-compatible collector.

Resource attributes

Each exported span includes shared process metadata:

- service.name = cadmus

- service.version = <git describe output>

- cadmus.run_id = <uuid-v7>

- hostname = <system hostname>

Configuration

See the settings reference for the full

logging and OTLP configuration. The main option is logging.otlp-endpoint.

OTEL_EXPORTER_OTLP_ENDPOINT overrides logging.otlp-endpoint when both are

set.

To build Cadmus with tracing support:

cargo build --features tracing


Profiling

Cadmus supports continuous profiling with Pyroscope when the profiling

feature is enabled.

What it does

- Uses jemalloc heap profiling for allocation data

- Uses pprof for CPU profiling data

- Pushes both profile types to Pyroscope

Feature flags

- profiling enables profiling support in the codebase

- telemetry enables profiling together with tracing

Runtime behavior

Profiling is initialized during app startup and shut down during app exit. If a Pyroscope endpoint is configured, Cadmus starts collecting profiles right away so early startup work is included.

The app configures jemalloc as the global allocator when profiling is enabled

and enables heap profiling at process startup. CPU profiling runs alongside it

through the Pyroscope agent.

Configuration

See the settings reference for the full logging and profiling configuration.

- logging.pyroscope-endpoint

- PYROSCOPE_SERVER_URL

PYROSCOPE_SERVER_URL overrides logging.pyroscope-endpoint when both are

set.

To build Cadmus with profiling support:

cargo build --features profiling

To build Cadmus with tracing and profiling together:

cargo build --features telemetry

Profile types

Cadmus currently exports:

- heap allocation profiles via jemalloc

- CPU profiles via pprof-rs

Both profile streams are pushed to the configured Pyroscope server.

Local development

The cadmus-dev-otel command starts the emulator with profiling enabled and

the local Pyroscope service available at http://localhost:4040.

With the full devenv stack running, traces go to Tempo, logs go to Loki, and profiles go to Pyroscope. That makes it possible to correlate a single run across all three observability backends.

Documentation Deployment

Cadmus documentation is deployed to Cloudflare Pages.

URLs

- Production: https://cadmus-dt6.pages.dev/

- PR Preview: https://pr-{NUMBER}.cadmus-dt6.pages.dev/

Reviewing Documentation Changes

When you open a pull request that modifies documentation files, a preview deployment is automatically created. The PR will show a deployment status with a link to the preview URL.

Preview URLs follow the pattern: https://pr-{NUMBER}.cadmus-dt6.pages.dev/

Local Development

Building and Serving

Build and serve documentation locally:

devenv shell

cargo xtask docs # Build all documentation

cadmus-docs-serve # Serve at http://localhost:1111

cargo xtask docs handles the full pipeline: installing Mermaid assets, building mdBook,

generating Rust API docs, and assembling the Zola portal. Pass --mdbook-only to skip the

Zola step when you only need to check the mdBook output.

To serve with live reload after building:

cd docs-portal && zola serve --base-url http://localhost

Build Process

Documentation is built from three sources:

- mdBook (docs/) - User and contributor guides

- Cargo doc (crates/) - Rust API documentation

- Zola (docs-portal/) - Documentation portal that combines everything

The build is orchestrated by cargo xtask docs (see xtask/src/tasks/docs.rs). The GitHub

Actions workflow (.github/workflows/cadmus-docs.yml) runs this command automatically on every

push to main and for every pull request.

Translations

Cadmus has two separate translation systems, each covering a different part of the project.

| What | System | Files |

|---|---|---|

| Documentation | GNU gettext / PO | docs/po/*.po |

| UI strings | Fluent (FTL) | crates/core/i18n/**/*.ftl |

Pick the guide that matches what you want to translate.

Translating Source Strings

The Cadmus UI uses Fluent for all user-visible strings. Translations are embedded directly into the binary at compile time — no external files are needed on the device.

How it works

- FTL files live under crates/core/i18n/<lang-tag>/cadmus_core.ftl.

- The fallback language is en-GB; any string missing from a translation falls back to the English text automatically.

- crates/core/src/i18n.rs loads the correct language at startup based on settings.locale.

- The fl!("message-id") macro resolves message IDs at compile time.
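The fallback behaviour can be pictured as a two-level lookup. The sketch below is a runtime model only, with hypothetical names (`Catalog`, `lookup`, `sample_catalog`) and one invented message ID; the real `fl!()` macro checks IDs at compile time and loads Fluent bundles rather than hash maps.

```rust
use std::collections::HashMap;

type Messages = HashMap<&'static str, &'static str>;

// A selected language layered over the en-GB fallback.
struct Catalog {
    fallback: Messages,
    selected: Option<Messages>,
}

impl Catalog {
    // Looks in the selected language first, then in the fallback.
    fn lookup(&self, id: &str) -> Option<&'static str> {
        self.selected
            .as_ref()
            .and_then(|messages| messages.get(id))
            .or_else(|| self.fallback.get(id))
            .copied()
    }
}

// Builds a small catalog with French selected.
fn sample_catalog() -> Catalog {
    let fallback = HashMap::from([
        ("startup-loading", "Cadmus starting up…"),
        ("only-in-english", "Not translated yet"), // hypothetical message ID
    ]);
    let french = HashMap::from([("startup-loading", "Chargement de Cadmus…")]);
    Catalog { fallback, selected: Some(french) }
}
```

A message present in the French map resolves to French; one that exists only in en-GB resolves to the English text.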

Adding a new language

1. Create the FTL file

Create a new file at the path matching the BCP 47 tag for your language:

crates/core/i18n/<lang-tag>/cadmus_core.ftl

For example, for French:

crates/core/i18n/fr/cadmus_core.ftl

2. Copy and translate the English strings

Use the English fallback file as your starting point:

crates/core/i18n/en-GB/cadmus_core.ftl

Translate each message value. The message ID (left of =) must stay

unchanged — only the value (right of =) changes:

# en-GB

startup-loading = Cadmus starting up…

# fr

startup-loading = Chargement de Cadmus…

3. Set the locale in Settings

To activate the new language locally during development, add a locale key to

your Settings.toml:

locale = "fr"

4. Build and verify

cargo check -p cadmus-core

The fl!() macro validates all message IDs at compile time. A successful build

confirms the FTL file is well-formed and all IDs referenced in code are present.

Adding a string to the source code

When you add a new UI string:

- Add the message to en-GB, and any other languages you know how to translate it into.

- Use the fl!() macro in the Rust source:

  let label = crate::fl!("my-new-message");

- For messages with variables, use named arguments:

  # In the FTL file
  books-loaded = Loaded { $count } books

  // In the Rust source
  let label = crate::fl!("books-loaded", count = book_count);

FTL file format

Fluent uses a straightforward syntax. A few rules to keep in mind:

- Message IDs use kebab-case.

- Values can span multiple lines by indenting continuation lines.

- Use Unicode characters directly; no escaping is needed.

- Comments start with #.

For the full syntax reference see the Fluent syntax guide.

Translating Cadmus Documentation

This guide explains how to translate the Cadmus documentation into other

languages. Translations live in docs/po/ as standard GNU gettext PO files.

Prerequisites

If you are using the devenv environment, all required tools are already

available:

- mdbook-xgettext / mdbook-gettext: string extraction and preprocessing

- msginit / msgmerge / msgfmt: gettext utilities (from gettext)

- poedit: graphical PO editor

Install them outside devenv with cargo install mdbook-i18n-helpers and your

system’s gettext package.

Adding a new language

1. Extract the POT template

Run the cadmus-translate script (devenv) or the equivalent command:

cadmus-translate

This writes docs/po/messages.pot — the source template every translation

derives from. Commit this file whenever the English source changes so

translators have an up-to-date starting point.

2. Create a PO file for your locale

# Replace 'fr' with the BCP 47 language tag you are adding.

msginit --input=docs/po/messages.pot \

--output-file=docs/po/fr.po \

--locale=fr

Open docs/po/fr.po and set the Language-Name header so the language picker

displays a readable label:

"Language-Name: Français\n"

The xtask reads this header when generating locales.json; without it the

locale code (e.g. fr) is shown instead.
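The header lookup with its fallback could be sketched as follows. This is not the xtask's actual parser: `language_label` is a hypothetical helper that scans header lines one by one and does not handle multi-line PO strings or escapes other than the trailing `\n`.

```rust
// Returns the label for a locale: the Language-Name header value if the PO
// source has one, otherwise the locale code itself.
fn language_label(po_source: &str, locale: &str) -> String {
    po_source
        .lines()
        .filter_map(|line| {
            // Header lines are quoted, e.g. "Language-Name: Français\n"
            let inner = line.trim().strip_prefix('"')?.strip_suffix('"')?;
            let value = inner.strip_prefix("Language-Name:")?;
            // Drop the literal backslash-n that ends every header line.
            Some(value.trim_end_matches("\\n").trim().to_string())
        })
        .find(|name| !name.is_empty())
        .unwrap_or_else(|| locale.to_string())
}
```

For a French PO file with the header set, the function returns the readable name; for a file without it, the result is simply the locale code.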

3. Translate the strings

Open the PO file in Poedit or any text editor and fill in each msgstr:

msgid "Welcome to Cadmus!"

msgstr "Bienvenue dans Cadmus !"

Preserve Markdown formatting — bold, code spans, links — exactly as in the

msgid. Untranslated or fuzzy entries fall back to the English source.

4. Build and preview

# Build everything including translated books

cargo xtask docs --base-url http://localhost

# Serve

cd docs-portal

zola serve

Navigate to http://localhost:1111/guide/ and use the language picker in the sidebar

to switch to your locale.

Keeping translations up to date

When English source files change, regenerate the template and merge new strings into existing PO files:

cadmus-translate # regenerate docs/po/messages.pot

msgmerge --update docs/po/fr.po docs/po/messages.pot

Excluding content from extraction

Place an <!-- i18n:skip --> comment directly before any block you want to keep in English:

<!-- i18n:skip -->

This paragraph will not appear in the POT file.

How the build works

- cargo xtask docs calls mdbook build -d book/<lang> for each .po file found in docs/po/, passing MDBOOK_BOOK__LANGUAGE=<lang>.

- The [preprocessor.gettext] in docs/book.toml substitutes translated strings at build time.

- locales.json is written to docs/book/html/ with the available locales; lang-picker.js fetches it at runtime to populate the language dropdown.

- Symlinks under docs-portal/static/guide/<lang>/ expose each locale build to Zola so it is served at /guide/<lang>/.
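The first step, deriving one build per .po file, can be sketched like this. The `LocaleBuild` struct and `locale_builds` function are hypothetical and only model the naming scheme described above; they are not taken from xtask/src/tasks/docs.rs, and they work on file names rather than reading the directory.

```rust
use std::path::Path;

// One mdBook build derived from a PO file.
#[derive(Debug, PartialEq)]
struct LocaleBuild {
    lang: String,
    out_dir: String, // value for `mdbook build -d`
    env: String,     // environment assignment passed to the build
}

// Keeps only `*.po` files and derives the language tag from the file stem.
fn locale_builds(file_names: &[&str]) -> Vec<LocaleBuild> {
    let mut langs: Vec<String> = file_names
        .iter()
        .map(|name| Path::new(*name))
        .filter(|path| path.extension().and_then(|ext| ext.to_str()) == Some("po"))
        .filter_map(|path| path.file_stem()?.to_str().map(str::to_owned))
        .collect();
    langs.sort();
    langs
        .into_iter()
        .map(|lang| LocaleBuild {
            out_dir: format!("book/{lang}"),
            env: format!("MDBOOK_BOOK__LANGUAGE={lang}"),
            lang,
        })
        .collect()
}
```

Note that messages.pot is skipped naturally because its extension is pot, not po.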

SQLite & SQLx

Cadmus uses SQLite as its embedded database and SQLx as the Rust database library. SQLx provides compile-time SQL verification — every query is checked against the real schema before the code ships.

The .sqlx directory

The .sqlx/ directory at the repository root contains one JSON metadata file

per SQL query. Each file stores the resolved column names, types, and parameter

types that SQLx inferred from the live database schema at the time

cargo sqlx prepare was last run.

.sqlx/

├── query-10c2db2a….json ← compile-time metadata for one query

├── query-13c26d81….json

└── …

Regenerating query metadata

After adding or changing any SQL query, regenerate the metadata:

cargo sqlx prepare --all --workspace

This connects to the database, re-introspects every query macro in the

workspace, and rewrites the .sqlx/ JSON files. Commit the updated files

alongside your code change.

Important

If you forget to run cargo sqlx prepare, the CI check job will fail because the cached metadata will be out of date with your query changes.

Compile-time SQL checking

SQLx’s typed query macros (query!, query_as!, query_scalar!) verify SQL at

compile time using the metadata in .sqlx/. This means:

- Typos in column names are compiler errors, not runtime panics.

- Binding the wrong type to a ? placeholder is a type error.

- Adding or removing a column in a migration without updating queries is caught before deployment.

The macros require the DATABASE_URL environment variable to point at a live

database when running cargo sqlx prepare, but not during regular cargo build or cargo check — those use the pre-generated .sqlx/ files.

Important

.sqlx/ is only used when the SQLX_OFFLINE=true environment variable is set, which is the default if you’re using devenv.nix.

Review rules

The following rules are enforced during code review for all SQLx queries.

Use typed macros only

Always use the typed macros. Never call the untyped query(), query_as(), or

query_scalar() functions:

| Goal | Use |

|---|---|

| INSERT, UPDATE, DELETE, raw SELECT | sqlx::query! |

| SELECT mapped into a named struct | sqlx::query_as! |

| Single-column SELECT | sqlx::query_scalar! |

When the column is nullable, call .flatten() on the result to collapse

Option<Option<T>> into Option<T>:

let id: Option<i64> =
    sqlx::query_scalar!("SELECT id FROM libraries WHERE path = ?", path)
        .fetch_optional(pool)
        .await?
        .flatten();

List explicit column names

Never use SELECT *. Always name every column you need:

-- ✅ Good

SELECT id, path, name FROM libraries WHERE id = ?

-- ❌ Bad

SELECT * FROM libraries WHERE id = ?

Store timestamps as Unix epoch integers

All date/time values must be stored as INTEGER NOT NULL (Unix epoch seconds).

Do not use TEXT columns for timestamps:

-- ✅ Good

created_at INTEGER NOT NULL

-- ❌ Bad

created_at TEXT NOT NULL DEFAULT (datetime('now'))
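On the Rust side, a value for such a column can be produced with the standard library alone. A minimal sketch, assuming hypothetical helper names; it returns `i64` because SQLite's INTEGER is a signed 64-bit value:

```rust
use std::time::{SystemTime, UNIX_EPOCH};

// Converts a SystemTime to whole seconds since the Unix epoch.
// Times before the epoch come out negative instead of panicking.
fn epoch_seconds(time: SystemTime) -> i64 {
    match time.duration_since(UNIX_EPOCH) {
        Ok(elapsed) => elapsed.as_secs() as i64,
        Err(err) => -(err.duration().as_secs() as i64),
    }
}

// Current time as an epoch-seconds integer, ready to bind to a query.
fn now_epoch_seconds() -> i64 {
    epoch_seconds(SystemTime::now())
}
```

Integers like this compare and sort correctly in SQL without any date parsing.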

Add only indexes that are actively used

Every index must be used by at least one query in the codebase. Unused indexes waste write performance and storage without any read benefit. Before adding an index, verify a query filters, sorts, or joins on the indexed column(s).

API reference

The primary database types live in the cadmus_core::db module:

- cadmus_core::db::Database: the top-level sync/async bridge that owns the connection pool

- cadmus_core::db::migrations::MigrationRunner: executes all pending runtime migrations

- cadmus_core::migration!: macro for declaring one-time runtime migrations

See Library Database for how the library subsystem uses the database, and Runtime Migrations for how to write one-time data migrations.

Library Database

The library subsystem stores all book metadata, reading progress, and table-of-contents data in SQLite. This page explains the schema, the key database types, and how data flows from disk into the database.

Schema overview

The database is created and versioned by the SQL migration files in

crates/core/migrations/. The initial schema defines eleven tables plus one

aggregating view:

erDiagram

books {

TEXT fingerprint PK

TEXT title

TEXT file_path

TEXT file_kind

INTEGER file_size

INTEGER added_at

}

authors {

INTEGER id PK

TEXT name

}

book_authors {

TEXT book_fingerprint FK

INTEGER author_id FK

INTEGER position

}

categories {

INTEGER id PK

TEXT name

}

book_categories {

TEXT book_fingerprint FK

INTEGER category_id FK

}

reading_states {

TEXT fingerprint PK

INTEGER opened

INTEGER current_page

INTEGER pages_count

INTEGER finished

}

thumbnails {

TEXT fingerprint PK

BLOB thumbnail_data

}

toc_entries {

TEXT id PK

TEXT book_fingerprint FK

TEXT parent_id FK

INTEGER position

TEXT title

TEXT location_kind

}

libraries {

INTEGER id PK

TEXT path

TEXT name

INTEGER created_at

}

library_books {

INTEGER library_id FK

TEXT book_fingerprint FK

INTEGER added_to_library_at

}

_cadmus_migrations {

TEXT id PK

INTEGER executed_at

TEXT status

}

books ||--o{ book_authors : ""

authors ||--o{ book_authors : ""

books ||--o{ book_categories : ""

categories ||--o{ book_categories : ""

books ||--o| reading_states : ""

books ||--o| thumbnails : ""

books ||--o{ toc_entries : ""

toc_entries ||--o{ toc_entries : "parent_id"

libraries ||--o{ library_books : ""

books ||--o{ library_books : ""

Key design choices

- books is the main table. Every other per-book table references books.fingerprint with ON DELETE CASCADE, so deleting a book row removes all associated data automatically.

- Authors are normalised. authors holds unique author names; book_authors is the join table and carries a position column that preserves display order.

- All tables use STRICT mode. SQLite’s STRICT table option enforces column type constraints at the storage layer, catching type mismatches early.

- Timestamps are Unix epoch integers. added_at, created_at, and similar columns are INTEGER NOT NULL, never TEXT.

- TOC tree via adjacency list. toc_entries.parent_id is a self-reference; position preserves sibling order. The id is a UUID v7 (generated in Rust), so ORDER BY id ASC gives stable insertion order without a growing rowid.

- library_books_full_info view. An aggregating view joins books, reading_states, book_authors, authors, book_categories, and categories in one query. The library_id column from library_books is exposed so callers can filter with a plain WHERE library_id = ?.
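To show how the adjacency list turns back into a tree, here is a sketch that rebuilds nested entries from flat rows. `TocRow` carries only a subset of the toc_entries columns, and `TocNode`, `row`, and `build_tree` are hypothetical names for illustration; the actual loading code may differ.

```rust
use std::collections::HashMap;

// A flat row, using a subset of the toc_entries columns.
struct TocRow {
    id: String,
    parent_id: Option<String>,
    position: i64,
    title: String,
}

#[derive(Debug, PartialEq)]
struct TocNode {
    title: String,
    children: Vec<TocNode>,
}

// Convenience constructor for the sketch.
fn row(id: &str, parent_id: Option<&str>, position: i64, title: &str) -> TocRow {
    TocRow {
        id: id.to_string(),
        parent_id: parent_id.map(str::to_string),
        position,
        title: title.to_string(),
    }
}

// Rebuilds the tree: group rows by parent, order siblings by position,
// then attach children recursively starting from the rows with no parent.
fn build_tree(rows: &[TocRow]) -> Vec<TocNode> {
    let mut by_parent: HashMap<Option<&str>, Vec<&TocRow>> = HashMap::new();
    for r in rows {
        by_parent.entry(r.parent_id.as_deref()).or_default().push(r);
    }
    for siblings in by_parent.values_mut() {
        siblings.sort_by_key(|r| r.position);
    }

    fn attach(parent: Option<&str>, by_parent: &HashMap<Option<&str>, Vec<&TocRow>>) -> Vec<TocNode> {
        by_parent
            .get(&parent)
            .map(|rows| {
                rows.iter()
                    .map(|r| TocNode {
                        title: r.title.clone(),
                        children: attach(Some(r.id.as_str()), by_parent),
                    })
                    .collect::<Vec<_>>()
            })
            .unwrap_or_default()
    }

    attach(None, &by_parent)
}
```

Rows can arrive in any order; the position column alone decides how siblings are arranged.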

Data access layer

The `cadmus_core::library::db::Db` struct is the entry point for all library database operations. It wraps the shared `SqlitePool` and exposes a synchronous API by bridging every async SQLx call through the global Tokio runtime:

flowchart LR

caller["Caller (sync event loop)"]

Db["library::db::Db"]

RUNTIME["RUNTIME.block_on()"]

SQLx["SQLx async query"]

SQLite[("SQLite")]

caller -->|sync call| Db

Db -->|wraps in| RUNTIME

RUNTIME -->|awaits| SQLx

SQLx -->|reads/writes| SQLite

SQLx -->|result| RUNTIME

RUNTIME -->|returns| caller

The sync bridge exists because Cadmus’s UI event loop is single-threaded and

synchronous. The global RUNTIME (a tokio::runtime::Runtime singleton) lets

the rest of the codebase call database methods without needing to be async.
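The shape of such a sync bridge can be sketched without any dependencies. The example below is an illustration only: Cadmus uses Tokio's `Runtime::block_on`, whereas this sketch substitutes a tiny hand-written `block_on` so it compiles with the standard library alone. The `Db` struct, its `rows` field, and the `current_page` methods are hypothetical stand-ins, not the real API.

```rust
use std::future::Future;
use std::pin::pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};

/// Wakes the blocked caller thread when the future can make progress.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

/// Std-only stand-in for `tokio::runtime::Runtime::block_on`:
/// polls the future on the current thread until it completes.
fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(out) => return out,
            Poll::Pending => thread::park(),
        }
    }
}

/// Hypothetical stand-in for `library::db::Db`; `rows` replaces the pool.
struct Db {
    rows: Vec<(String, i64)>,
}

impl Db {
    /// Async implementation, playing the role of an SQLx query.
    async fn current_page_async(&self, fingerprint: &str) -> Option<i64> {
        self.rows
            .iter()
            .find(|(fp, _)| fp.as_str() == fingerprint)
            .map(|(_, page)| *page)
    }

    /// Synchronous facade: the only method the event loop would call.
    pub fn current_page(&self, fingerprint: &str) -> Option<i64> {
        block_on(self.current_page_async(fingerprint))
    }
}
```

The point of the pattern is that callers see only the synchronous method; the async function stays private to the data access layer.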

Key methods on Db:

| Method | Purpose |
|---|---|
| `register_library` | Insert a new library row and return its id |
| `get_library_by_path` | Look up a library id by filesystem path |
| `get_all_books` | Fetch every book in a library via the full-info view |
| `insert_book` | Write a new book and its authors/categories |
| `save_reading_state` | Save or update reading progress for a book |
| `save_toc` | Bulk-write a book’s table of contents |
| `get_thumbnail` | Retrieve the stored cover thumbnail BLOB |
| `save_thumbnail` | Save or replace a cover thumbnail |

How a book scan flows into the database

When a library directory is scanned, Cadmus follows this sequence:

sequenceDiagram

participant Scanner as Library Scanner

participant Db as library::db::Db

participant SQLite

Scanner->>Db: register_library(path, name)

Db->>SQLite: INSERT INTO libraries

SQLite-->>Db: library_id

loop for each book file

Scanner->>Db: insert_book(library_id, fp, info)

Db->>SQLite: INSERT INTO books

Db->>SQLite: INSERT INTO authors / book_authors

Db->>SQLite: INSERT INTO book_categories

Db->>SQLite: INSERT INTO library_books

end

loop for each book with reading progress

Scanner->>Db: save_reading_state(fp, reader_info)

Db->>SQLite: INSERT OR REPLACE INTO reading_states

end

loop for each book with a TOC

Scanner->>Db: save_toc(fp, entries)

Db->>SQLite: INSERT INTO toc_entries

end

Related pages

- SQLite & SQLx: compile-time query verification, review rules

- Runtime Migrations: one-time data migrations using the `migration!` macro

Runtime Migrations

Cadmus has two distinct migration pipelines:

- Schema migrations: plain `.sql` files in `crates/core/migrations/`, applied by SQLx’s `migrate!` macro at startup. Use these for `CREATE TABLE`, `ALTER TABLE`, and similar DDL changes.

- Runtime migrations: Rust `async fn` blocks declared with the `migration!` macro. Use these for one-time data operations such as backfilling columns, importing legacy files, cleaning up obsolete rows, or any procedural work that goes beyond SQL DDL.

How runtime migrations work

flowchart TD

ctor["#[ctor] runs at process start"]

registry["Global REGISTRY HashMap<br>(migration id → async fn)"]

startup["Database::migrate() called on startup"]

schema["sqlx::migrate!() — applies .sql files"]

runner["MigrationRunner::run_all()"]

table["_cadmus_migrations table<br>(id, executed_at, status)"]

pending["Filter: id NOT IN already-succeeded rows"]

exec["Execute each pending migration in id order"]

record["Record success or failure in _cadmus_migrations"]

ctor --> registry

startup --> schema

schema --> runner

runner --> table

table --> pending

pending --> exec

exec --> record

At process start, the `#[ctor]` constructor generated by each `migration!` call runs and inserts the migration function into a global HashMap. When `Database::migrate` is called during application startup, it first applies all pending SQL schema migrations, then calls `MigrationRunner::run_all()`, which:

- Reads `_cadmus_migrations` and collects IDs that already succeeded.

- Skips those; runs the remaining ones sorted by ID.

- Records each result (`success` or `failed`) before moving on.

- Continues past failures so one broken migration does not block others.

A failed migration can be retried by deleting its tracking row (see Re-running a migration).
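The runner logic described above can be approximated in a few lines. This is a simplified, synchronous sketch rather than the real `MigrationRunner`: `MigrationFn`, `Status`, and this `run_all` signature are assumptions made for illustration, a `BTreeMap` stands in for both the registry (to get ID ordering for free) and the `_cadmus_migrations` table, and migrations are plain functions instead of `async fn`s taking a pool.

```rust
use std::collections::BTreeMap;

/// Hypothetical stand-in for a migration body; the real ones are `async fn`s
/// that receive a `&SqlitePool`.
type MigrationFn = fn() -> Result<(), String>;

/// Outcome stored per migration, mirroring the `status` column.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Status {
    Success,
    Failed,
}

/// Runs every registered migration that has not yet succeeded, in ID order,
/// recording each outcome in `tracking` (a stand-in for `_cadmus_migrations`).
/// Returns the IDs that were executed during this call.
fn run_all(
    registry: &BTreeMap<&'static str, MigrationFn>,
    tracking: &mut BTreeMap<String, Status>,
) -> Vec<String> {
    let mut executed = Vec::new();
    // A BTreeMap iterates its keys in sorted order, which gives "sorted by ID".
    for (id, migration) in registry {
        if tracking.get(*id) == Some(&Status::Success) {
            continue; // already succeeded on an earlier run: skip
        }
        let status = match migration() {
            Ok(()) => Status::Success,
            Err(_) => Status::Failed, // record the failure and keep going
        };
        tracking.insert(id.to_string(), status);
        executed.push(id.to_string());
    }
    executed
}
```

In this sketch only succeeded IDs are filtered out, matching the filter as described, so an entry recorded as failed is attempted again on the next call, and removing a tracking entry makes that migration pending again.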

The migration! macro

`cadmus_core::migration!` takes a stable string ID and an `async fn` definition:

    cadmus_core::migration!(
        /// One-line doc comment forwarded to rustdoc.
        "v1_my_migration",
        async fn my_migration(pool: &SqlitePool) {
            sqlx::query!("UPDATE books SET title = TRIM(title)")
                .execute(pool)
                .await?;
            Ok(())
        }
    );

The macro:

- Generates the `async fn` with the provided body.

- Creates a public submodule named after the function that exposes a `MIGRATION_ID` constant, useful for tests and cross-references.

- Registers the function in the global `REGISTRY` via `#[ctor]` so it runs automatically without any manual wiring.

- Forwards doc comments onto the generated items so rustdoc picks them up.

- Appends the migration ID and a re-run SQL snippet to the generated docs.

Where to put migration code

Co-locate migrations with the feature they belong to. The library subsystem’s

migrations live in crates/core/src/library/migrations.rs; a hypothetical

reader subsystem would put its migrations in

crates/core/src/reader/migrations.rs.

The module only needs to be declared once so the #[ctor] registration runs.

There is no central registry file to update.

Writing a migration step by step

1. Choose a stable ID

The ID is the primary key in _cadmus_migrations. Once a migration has been

deployed it must never be renamed, because existing installations track it by

this string.

Convention: v<N>_<short_description>, for example v1_backfill_book_language.
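As a rough illustration of the naming convention, the check below accepts IDs of the form `v<N>_<short_description>`. The function `follows_id_convention` is hypothetical and not part of Cadmus, and the requirement that the description be lowercase snake_case is an assumption inferred from the example ID, not a documented rule.

```rust
/// Checks the `v<N>_<short_description>` convention: a `v`, one or more
/// digits, an underscore, then a non-empty description.
/// Hypothetical helper for illustration; the snake_case restriction on the
/// description is an assumption based on the example ID.
fn follows_id_convention(id: &str) -> bool {
    let Some(rest) = id.strip_prefix('v') else {
        return false;
    };
    // Split at the first underscore: version number before, description after.
    let Some((version, description)) = rest.split_once('_') else {
        return false;
    };
    !version.is_empty()
        && version.bytes().all(|b| b.is_ascii_digit())
        && !description.is_empty()
        && description
            .bytes()
            .all(|b| b.is_ascii_lowercase() || b.is_ascii_digit() || b == b'_')
}
```

A check like this could live in a unit test that iterates over the exposed `MIGRATION_ID` constants, though whether the project wants to enforce the convention mechanically is a separate decision.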

2. Create the migration file (or add to an existing one)

    // crates/core/src/my_feature/migrations.rs
    use sqlx::SqlitePool;

    cadmus_core::migration!(
        /// Backfills the `language` column for books that were imported before
        /// language detection was added.
        "v1_backfill_book_language",
        async fn backfill_book_language(pool: &SqlitePool) {
            sqlx::query!(
                "UPDATE books SET language = 'en' WHERE language = '' OR language IS NULL"
            )
            .execute(pool)
            .await?;
            Ok(())
        }
    );

3. Use SQLx typed macros

All SQL inside a migration must use the typed macros for compile-time verification (see SQLite & SQLx):

    // ✅ Good: compile-time checked
    sqlx::query!("DELETE FROM books WHERE file_path = ?", path)
        .execute(pool)
        .await?;

    // ❌ Bad: untyped, bypasses verification
    sqlx::query("DELETE FROM books WHERE file_path = ?")
        .bind(path)
        .execute(pool)
        .await?;

4. Make it idempotent

Use INSERT OR IGNORE, ON CONFLICT DO NOTHING, or guard with a WHERE clause

so the migration is safe to re-run without corrupting data.
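The effect of a `WHERE` guard can be shown with an in-memory stand-in for the `books.language` column. This is a sketch only: `backfill_language` is a hypothetical function that mirrors the guarded `UPDATE` from step 2 on a slice, with `None` playing the role of SQL `NULL`.

```rust
/// Hypothetical in-memory analogue of
/// `UPDATE books SET language = 'en' WHERE language = '' OR language IS NULL`.
/// Returns the number of rows changed, like `rows_affected()` would.
fn backfill_language(languages: &mut [Option<String>]) -> usize {
    let mut changed = 0;
    for lang in languages.iter_mut() {
        // The guard: only rows with a missing or empty language qualify.
        let missing = lang.as_deref().map_or(true, str::is_empty);
        if missing {
            *lang = Some("en".to_string());
            changed += 1;
        }
    }
    changed
}
```

Running the function a second time changes nothing, because no row satisfies the guard any more; rows that already had a language are never touched on either run.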

5. Regenerate .sqlx metadata

After adding or changing any query in the migration, regenerate the compile-time metadata:

cargo sqlx prepare --all --workspace

Commit the updated .sqlx/ files alongside your migration code.

Re-running a migration

Delete the tracking row, then restart the application:

DELETE FROM _cadmus_migrations WHERE id = 'v1_my_migration';

The next startup will treat the migration as pending and run it again.

API reference

- `cadmus_core::migration!`: macro for declaring runtime migrations

- `cadmus_core::db::migrations::MigrationRunner`: executes all pending registered migrations

- `cadmus_core::db::Database`: owns the connection pool and orchestrates both migration stages

Investigations

When something needs investigating, the investigation and its results are documented here so that they are not lost and can be referred to later.

| Date | Platform | Title |

|---|---|---|

| 2026-03-24 | Kobo | DHCP IP Address Changes on WiFi Toggle |

DHCP IP Address Changes on WiFi Toggle

After identifying that killing the original dhcpcd and replacing it with

udhcpc caused the issue reported in #51,

I confirmed the fix works in testing but wanted to understand the root cause